To combat the lack of expressiveness in the avatar's face, you can also animate with the face puppet and/or face key features. And, as I state in the video, there is always the option to re-record the dialogue and sync the animation to it afterward (it would probably take a lot of tedious work, but it is possible with iClone's animation timeline feature).

Of course, the "speech" voice leaves much to be desired, as does the lack of facial expressiveness. The text-to-speech feature for facial lip-sync animation, though quite rudimentary, produces fairly consistent output. I will also include some writing for context, after-the-fact thoughts, and reflections on the affordances of each technique.

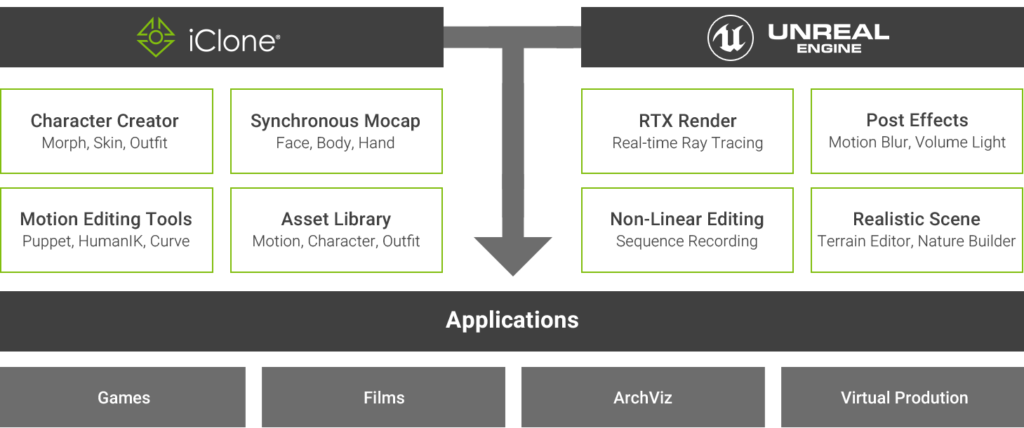

As someone who is, so far, working exclusively in iClone 7, I decided to document my trials and errors with a few of its facial animation techniques (whether manual features or motion capture plug-ins available as an iClone pipeline feature). First and foremost, I want to mention that I harbored a few biases going into this assignment. Working within an extensive self-teaching model, I have watched numerous tutorials on all the features mentioned below, and by and large the LiveFace plug-in (a mocap feature which uses Apple's TrueDepth camera) came most recommended. So that was my bias going into these different techniques; however, I tried to remain neutral in my critiques of each feature. To accomplish this, I decided to take a different approach to my documentation methodology for this post, opting to live stream and record my reactions to each technique in real time as opposed to merely reflecting on the process after the fact. I had already watched tutorials on how to set up and use each feature, but the videos below demonstrate a sort of "stream of consciousness" reaction to each facial animation feature.
